This thread explains how Z.ai debugged GLM-5 serving issues that appeared only under high-concurrency, long-context coding-agent workloads, and what fixes restored correctness and improved throughput.

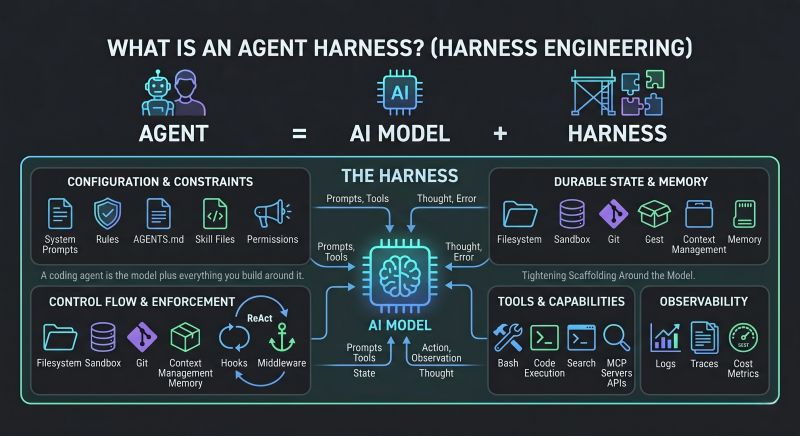

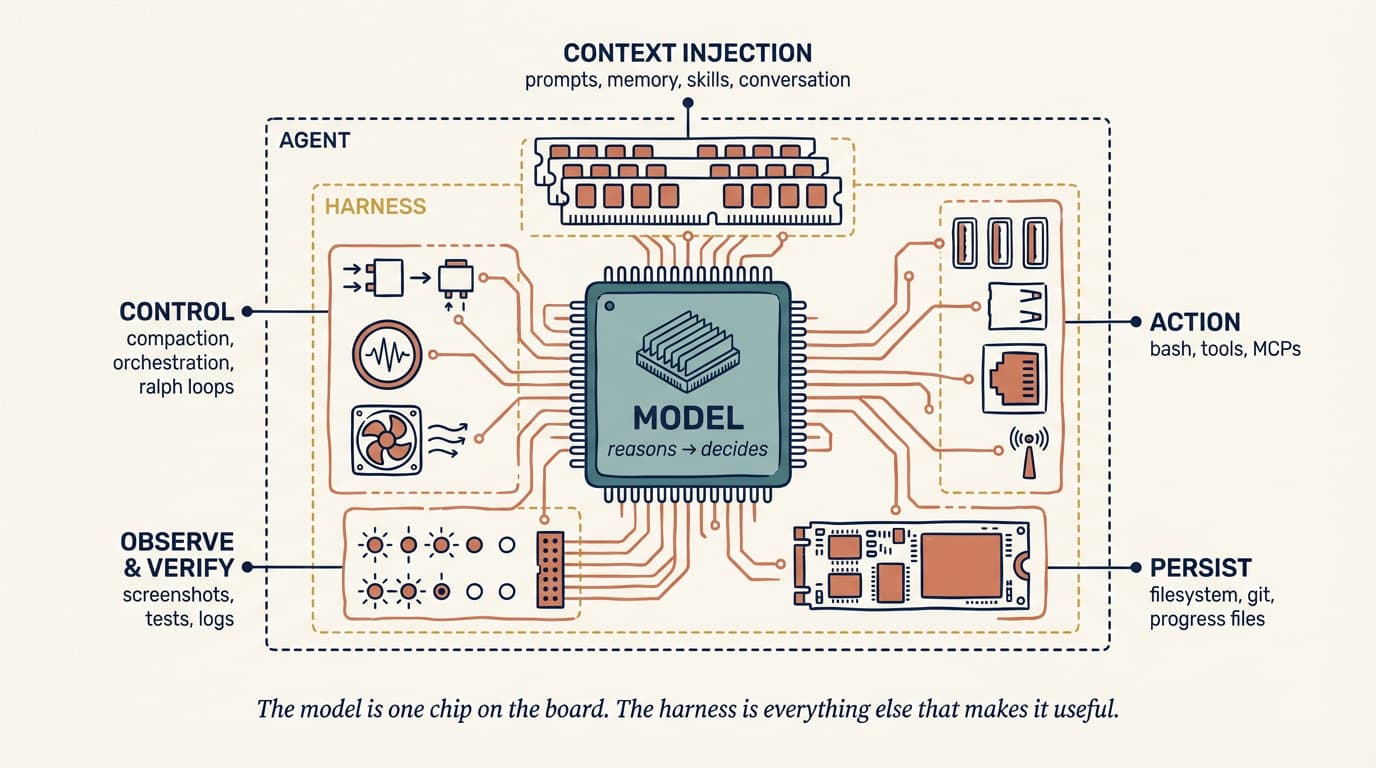

Core production reliability challenge

Scaling continues to increase model capability, but production reliability depends on fixing infrastructure problems that surface only at scale, especially around KV cache correctness and synchronization.

Reproducing anomalies under real load

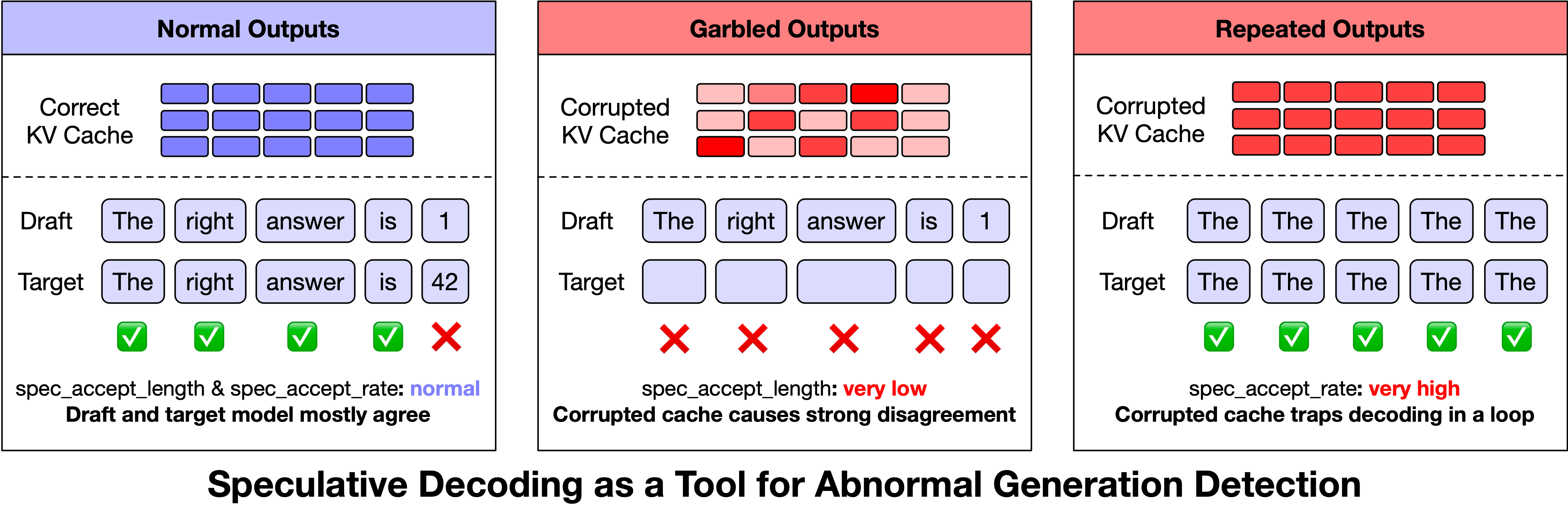

- Observed anomalies: garbled outputs, repetition, rare-character generation.

- They appeared only under high-concurrency and long-context coding-agent traffic.

- Speculative decoding metrics became detection signals: very low `spec_accept_length` and very high `spec_accept_rate`.

- A practical online guardrail was added to terminate and retry suspicious generations based on these metrics.
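
The guardrail described above can be sketched roughly as follows. This is a minimal illustration, not Z.ai's implementation: the threshold values, the `SpecStats` container, and the choice to treat either signal alone as suspicious are all assumptions, and the check runs after a generation finishes rather than terminating it mid-stream.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class SpecStats:
    """Speculative decoding statistics for one generation (hypothetical container)."""
    accept_length: float  # stands in for spec_accept_length
    accept_rate: float    # stands in for spec_accept_rate


# Hypothetical thresholds; real values would be tuned on production traffic.
MIN_ACCEPT_LENGTH = 1.2
MAX_ACCEPT_RATE = 0.98


def is_suspicious(stats: SpecStats) -> bool:
    # Assumption: either signal alone is enough to flag the generation.
    return (stats.accept_length < MIN_ACCEPT_LENGTH
            or stats.accept_rate > MAX_ACCEPT_RATE)


def generate_with_guardrail(
    generate: Callable[[], Tuple[str, SpecStats]],
    max_retries: int = 2,
) -> str:
    """Call `generate` until its stats look healthy or retries run out."""
    for _ in range(max_retries + 1):
        text, stats = generate()
        if not is_suspicious(stats):
            return text
    raise RuntimeError("generation stayed suspicious after all retries")
```

In a streaming server the same predicate would more likely be evaluated on a sliding window of recent decode steps, so that a bad generation is cut off early instead of being discarded at the end.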

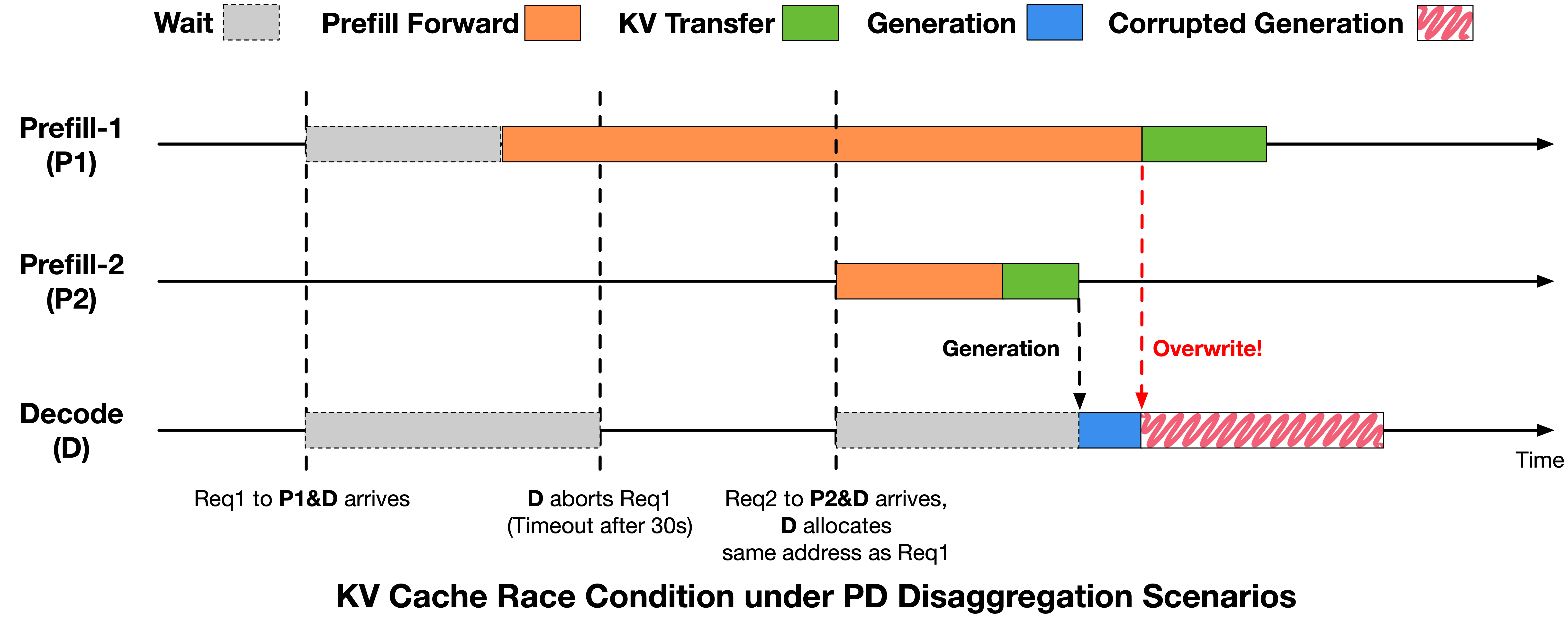

KV Cache reuse race under PD disaggregation

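Under prefill-decode (PD) disaggregation, Decode could abort a timed-out request and reclaim its KV cache while Prefill-side writes to that cache were still in flight. A new request could then reuse the same blocks and have them overwritten, creating cross-request cache corruption.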

Fix: reclaim and reuse KV cache only after Prefill confirms writes are safe (none started or all completed). Reported anomaly rate dropped from about 0.1% to below 0.03%.
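
A minimal sketch of that reclaim rule, assuming a simple block pool. The class, method names, and `WriteState` enum are hypothetical and do not come from the serving stack; the point is only that blocks of an aborted request stay quarantined while Prefill writes are in flight.

```python
from enum import Enum, auto


class WriteState(Enum):
    NOT_STARTED = auto()  # Prefill has not begun writing KV for this request
    IN_FLIGHT = auto()    # Prefill writes may still land in the blocks
    COMPLETED = auto()    # all Prefill writes have finished


class KVBlockPool:
    def __init__(self, num_blocks: int) -> None:
        self.free = list(range(num_blocks))
        self.owned = {}    # request_id -> blocks currently in use
        self.pending = {}  # request_id -> blocks waiting for Prefill confirmation

    def allocate(self, request_id: str, n: int) -> list:
        if n > len(self.free):
            raise MemoryError("not enough free KV blocks")
        blocks = [self.free.pop() for _ in range(n)]
        self.owned[request_id] = blocks
        return blocks

    def abort(self, request_id: str, prefill_state: WriteState) -> bool:
        """Abort a request; return True if its blocks were reclaimed immediately."""
        blocks = self.owned.pop(request_id)
        if prefill_state is WriteState.IN_FLIGHT:
            # Unsafe to reuse: quarantine until Prefill confirms completion.
            self.pending[request_id] = blocks
            return False
        self.free.extend(blocks)
        return True

    def on_prefill_done(self, request_id: str) -> None:
        """Prefill confirmed its writes finished; release quarantined blocks."""
        blocks = self.pending.pop(request_id, None)
        if blocks is not None:
            self.free.extend(blocks)
```

The cost of this design is that quarantined blocks are briefly unavailable, which trades a little cache capacity for the guarantee that no live request shares blocks with a pending write.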

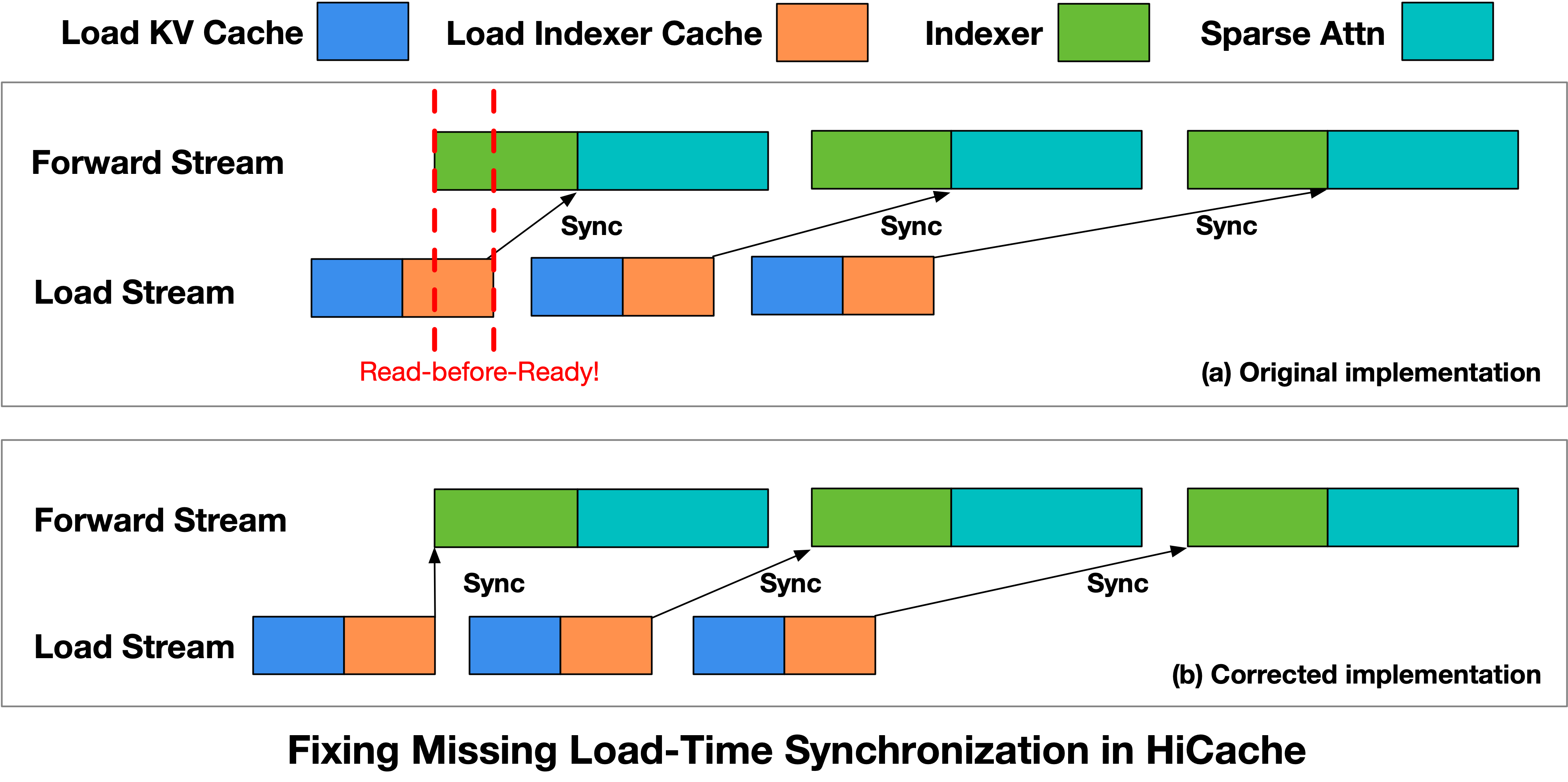

HiCache read-before-ready issue and synchronization fix

Async cache loading improved throughput, but Forward could start before the indexer cache had finished loading. The indexer then read data that was not yet ready, which led to abnormal outputs downstream.

Fix: explicit synchronization barrier before launching the indexer kernel; this was submitted upstream to SGLang (PR #22811).
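
The shape of the fix can be illustrated with a CPU-side analogy. This is not the code from the SGLang PR: it uses Python threads and a `threading.Event` in place of GPU streams and events, and `IndexerCache` and `run_indexer` are hypothetical names.

```python
import threading
import time


class IndexerCache:
    """Cache whose contents are filled in by a background loader."""

    def __init__(self) -> None:
        self.data = None
        self.ready = threading.Event()  # set only once loading has finished

    def load_async(self, payload, delay_s: float = 0.05) -> threading.Thread:
        def _load() -> None:
            time.sleep(delay_s)   # simulate transfer latency
            self.data = payload
            self.ready.set()      # publish: data is now safe to read

        thread = threading.Thread(target=_load, daemon=True)
        thread.start()
        return thread


def run_indexer(cache: IndexerCache, timeout_s: float = 1.0) -> int:
    # Barrier: block until the loader signals readiness. Without this wait,
    # the read below could observe `None` or stale data.
    if not cache.ready.wait(timeout_s):
        raise TimeoutError("indexer cache was not ready in time")
    return sum(cache.data)  # stand-in for the indexer kernel
```

The load still runs asynchronously, so the throughput benefit is kept; only the consumer that actually depends on the data waits for it.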

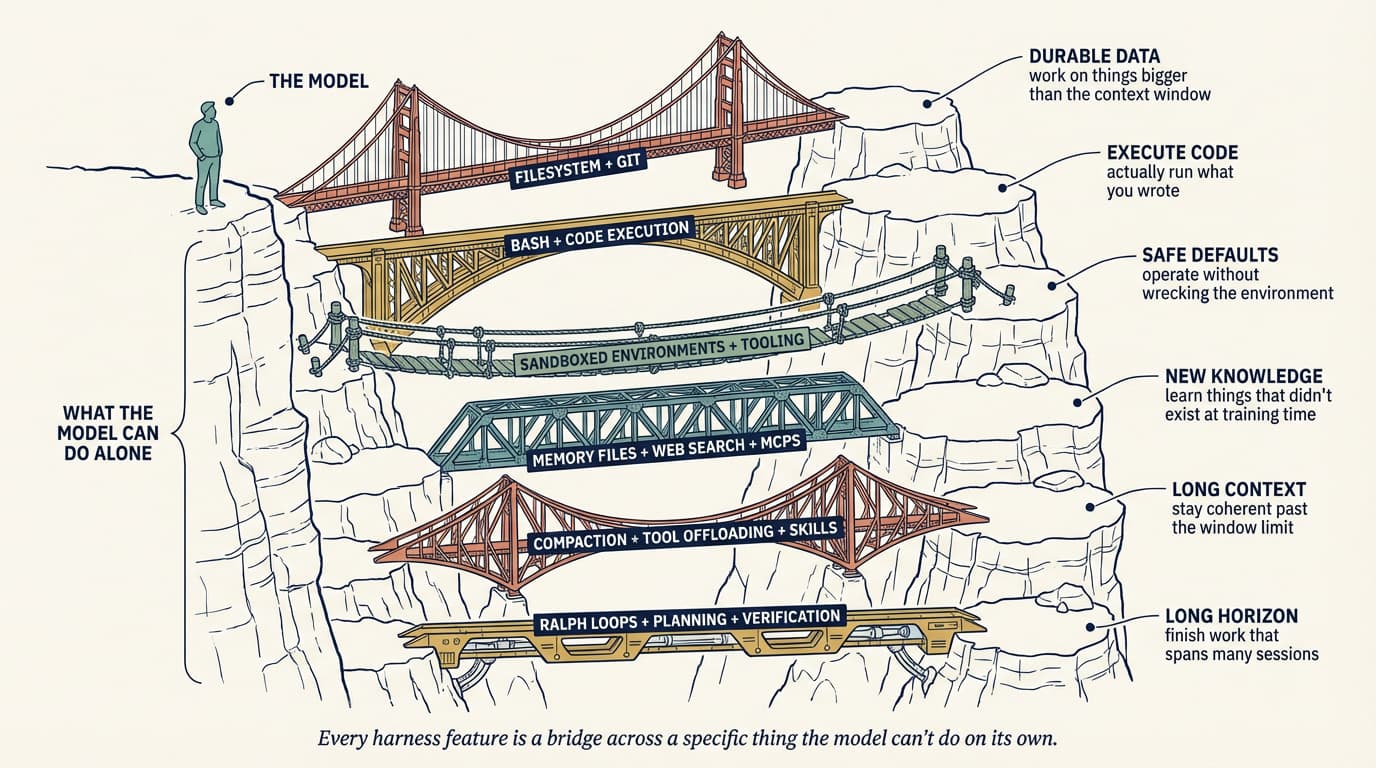

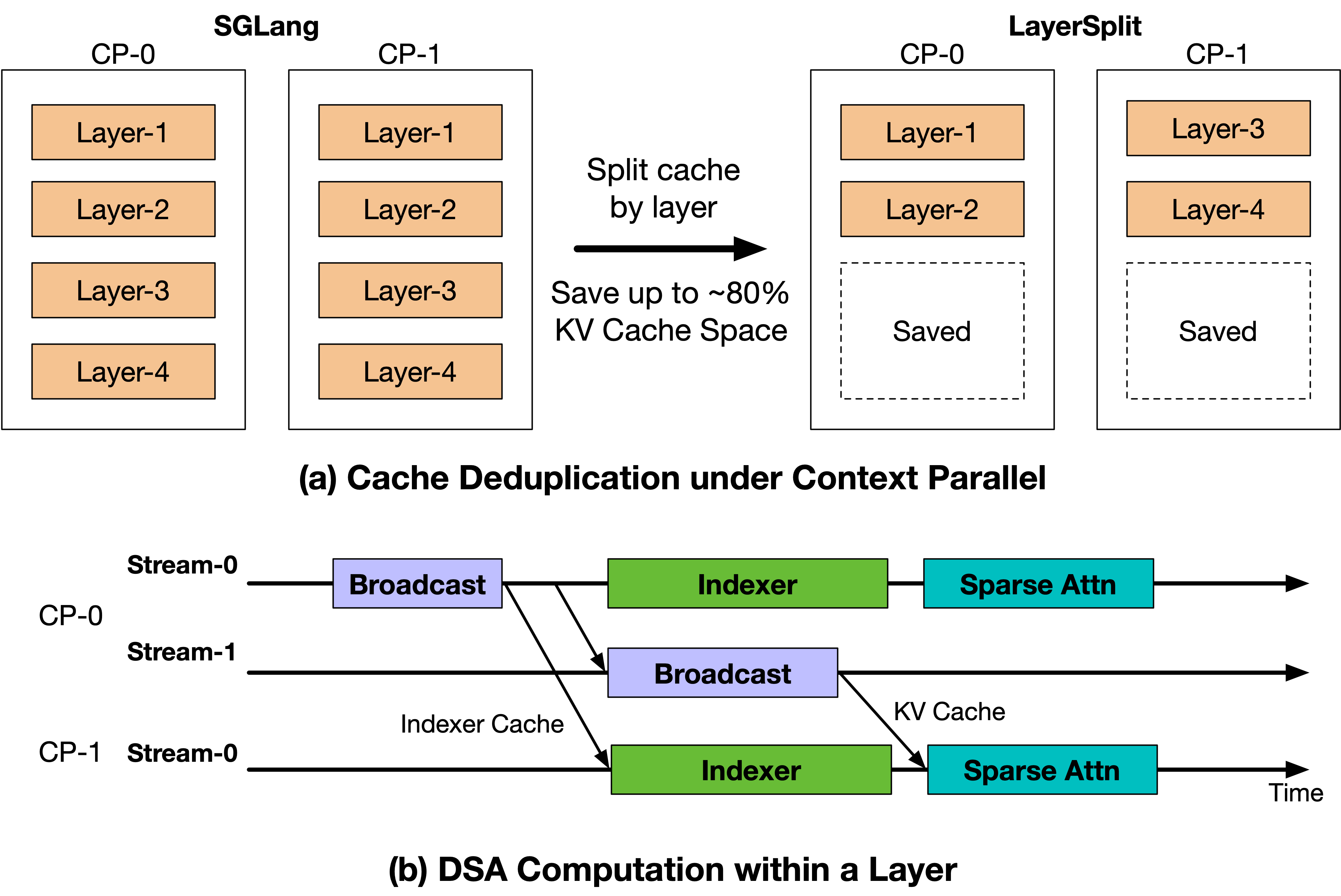

LayerSplit for long-context serving bottlenecks

After correctness fixes, the next bottleneck was Prefill throughput and memory pressure. LayerSplit stores only layer subsets per GPU and overlaps KV broadcast with indexer computation.
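
To make the memory effect concrete, here is a small sketch of layer ownership. The round-robin assignment and all function names are assumptions made for illustration; the thread does not state how LayerSplit maps layers to GPUs, and the overlap of broadcast with indexer computation is not modeled here.

```python
def owner_of(layer: int, num_gpus: int) -> int:
    """GPU that stores the KV cache for `layer` (assumed round-robin)."""
    return layer % num_gpus


def layers_on_gpu(gpu: int, num_layers: int, num_gpus: int) -> list:
    """Layers whose KV cache lives on `gpu`."""
    return [l for l in range(num_layers) if owner_of(l, num_gpus) == gpu]


def kv_bytes_per_gpu(num_layers: int, num_gpus: int, bytes_per_layer: int) -> int:
    """Worst-case KV bytes held by any single GPU under this split."""
    most_layers = max(
        len(layers_on_gpu(g, num_layers, num_gpus)) for g in range(num_gpus)
    )
    return most_layers * bytes_per_layer
```

With 8 layers on 4 GPUs, each GPU holds 2 layers instead of 8, so per-GPU KV memory falls to a quarter. The price is that the owning GPU must broadcast a layer's KV to its peers before they can use it, which is the transfer the design hides behind indexer computation.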

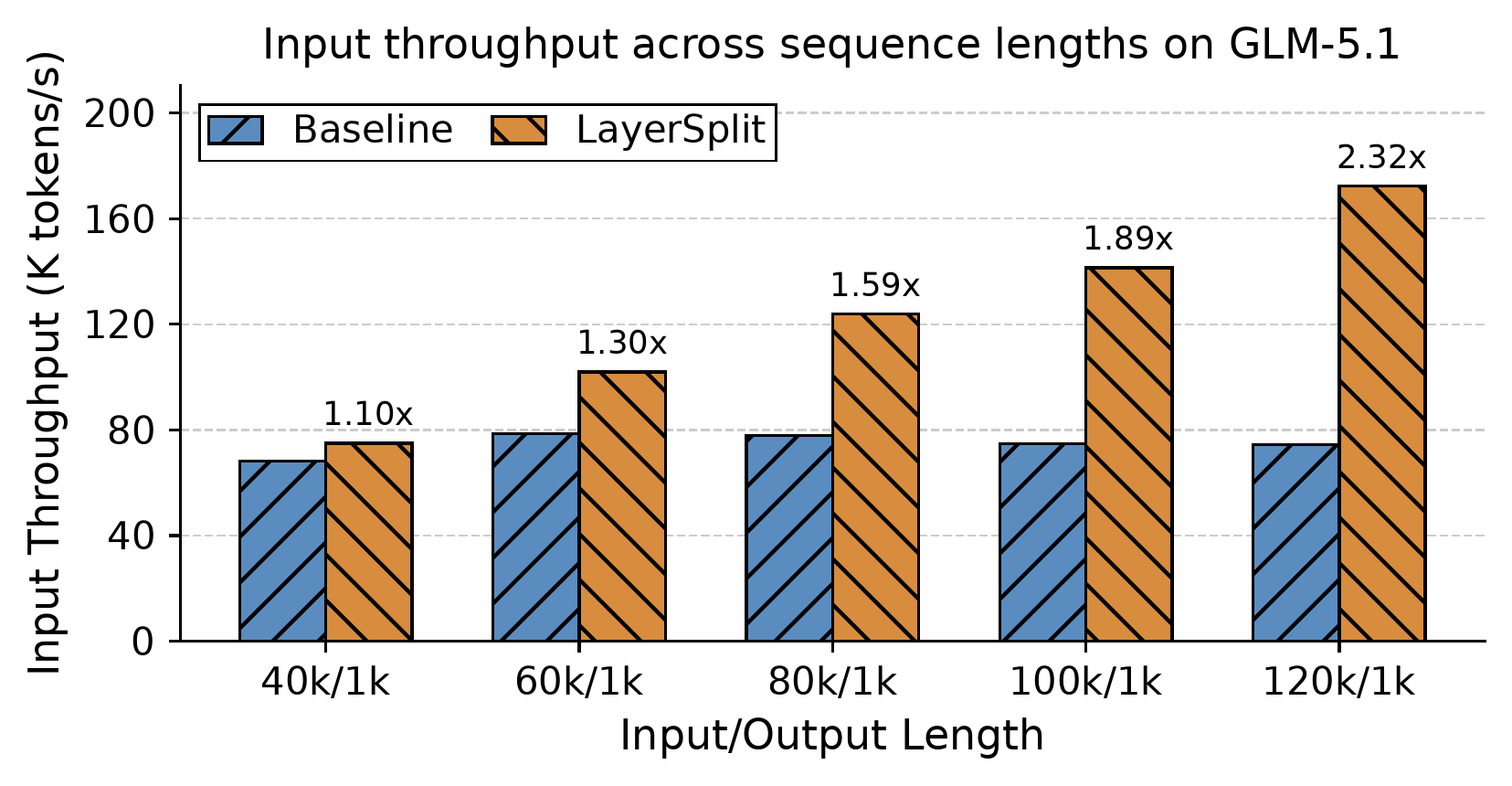

Reported result: throughput improvement ranged from 10% to 132% as context length increased under high cache-hit conditions.

Reliability principles for scaled inference

For large-scale agent serving, throughput, latency, and availability are not enough; infrastructure must also preserve model-state correctness for every generation.