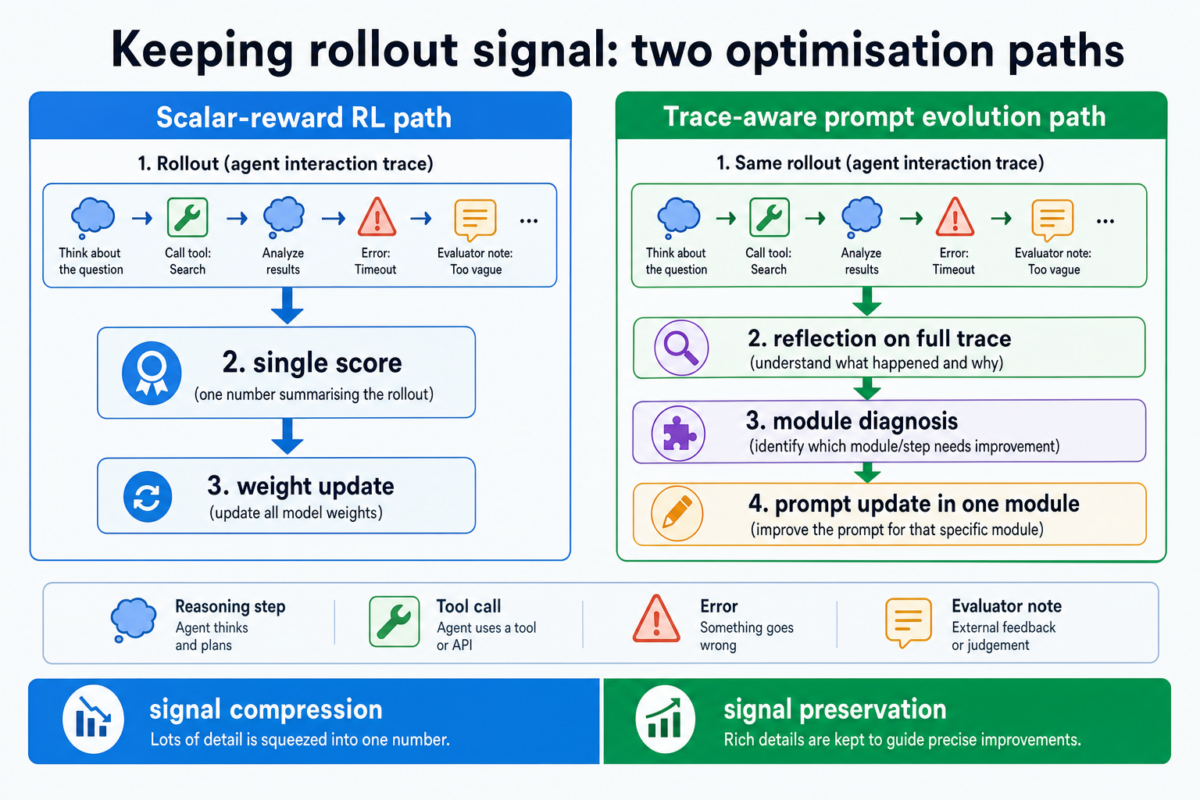

Many agent teams already collect rich rollout traces: reasoning steps, tool calls, errors, and evaluator notes. Yet optimisation workflows often reduce all of that information to a single final score.

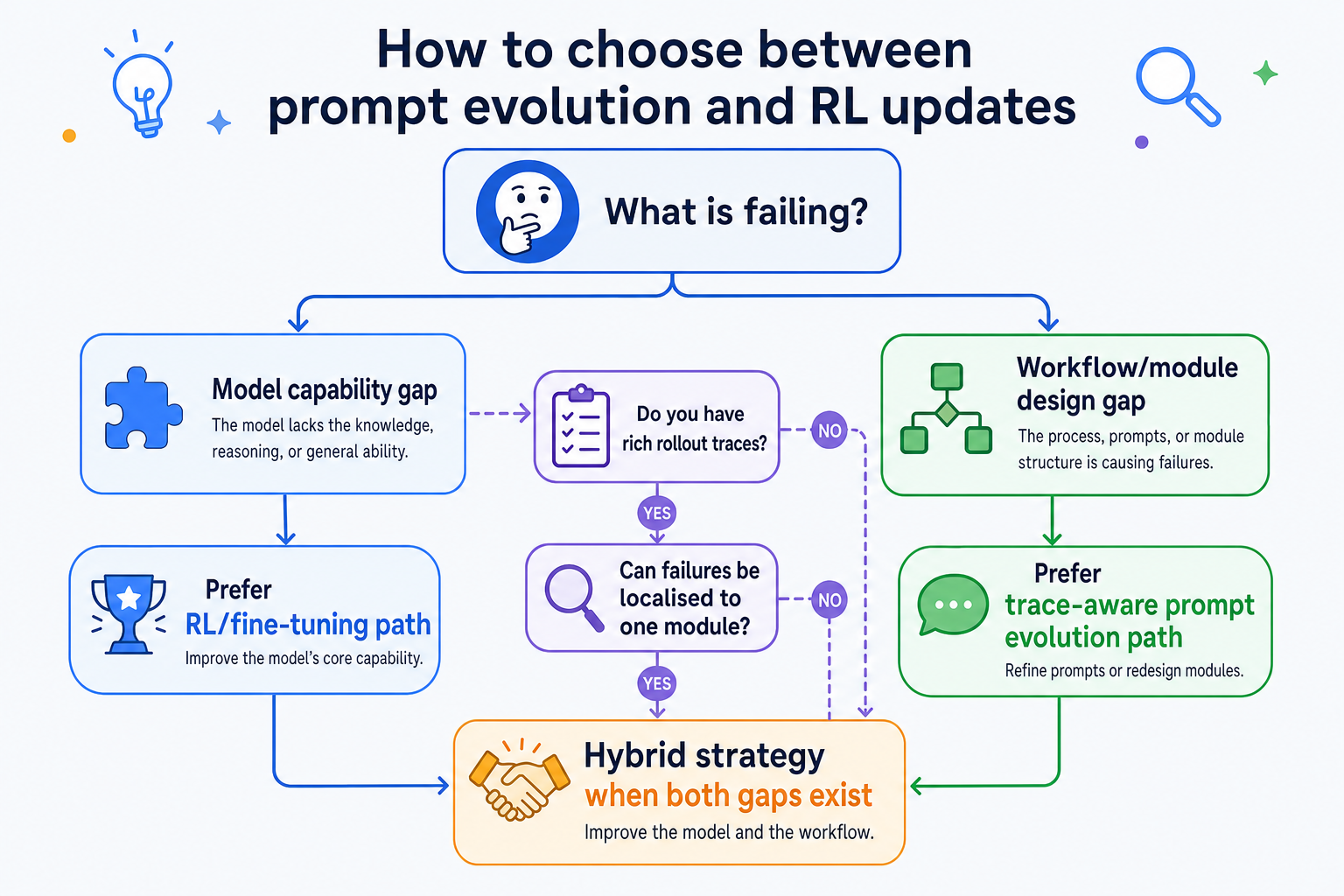

This post explains a practical choice: when to use reinforcement learning updates such as GRPO (Group Relative Policy Optimization), and when reflective prompt evolution such as GEPA (Genetic-Pareto) can deliver faster gains with fewer rollouts.

Why this matters for production agent systems

In production, rollouts are expensive. They cost model calls, tool calls, latency budget, and engineering time. If your optimiser extracts only a thin signal from each rollout, you often need far more samples to converge.

This is the core business question behind GEPA versus GRPO: how much useful learning signal do you keep from each trajectory?

The key difference is not “RL good” versus “prompts good”

A clearer framing is signal compression versus signal preservation.

- GRPO-style optimisation updates policy weights using reward-driven reinforcement learning objectives, so each rollout informs the update mainly through its scalar reward.

- GEPA-style optimisation keeps richer trajectory context and uses reflective analysis to evolve prompts in specific modules.

So this is not a binary replacement story. It is about choosing the right optimisation substrate for your bottleneck.
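To make the compression-versus-preservation contrast concrete, here is a minimal sketch. The advantage calculation follows the group normalisation described for GRPO in the DeepSeekMath paper (reward minus group mean, divided by group standard deviation); it uses the population standard deviation, and implementations may differ on that detail. The clipped policy objective and KL term of a full GRPO update are not shown. The `Rollout` record, its field names, and `reflection_context` are hypothetical and only illustrate what a reflective optimiser could read.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from statistics import mean, pstdev


@dataclass
class Rollout:
    """Hypothetical trace record; field names are illustrative only."""
    reward: float
    tool_errors: list[str] = field(default_factory=list)
    evaluator_note: str = ""


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Compression: each rollout is reduced to one number relative to its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]


def reflection_context(rollouts: list[Rollout]) -> str:
    """Preservation: keep the textual evidence alongside the score."""
    lines = []
    for i, r in enumerate(rollouts):
        errors = "; ".join(r.tool_errors) or "none"
        lines.append(
            f"rollout {i}: reward={r.reward} errors={errors} note={r.evaluator_note}"
        )
    return "\n".join(lines)
```

Two rollouts with the same reward get the same advantage, even if one failed on a tool timeout and the other on a misread instruction. The text produced by `reflection_context` keeps that distinction available.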

What the GEPA paper reports

The GEPA paper (accepted at ICLR 2026, oral) reports that reflective prompt evolution can outperform GRPO baselines across its evaluated tasks, while using substantially fewer rollouts in those experiments. It also reports strong gains over prior prompt optimisation baselines.

The practical takeaway for teams is not to copy benchmark numbers blindly, but to test whether your own trace quality is high enough for reflection-driven optimisation to work.

Where GEPA-style optimisation often helps first

- Multi-module agent pipelines where one component is clearly underperforming.

- Workflows with rich failure traces and interpretable evaluator feedback.

- Teams that need faster iteration without heavy weight-training infrastructure.

- Situations where the model appears capable, but instruction design and module coordination are weak.

In these cases, prompt-space evolution can localise changes, preserve readability, and improve sample efficiency.
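As a rough illustration of what a prompt-evolution loop on a single module can look like, the sketch below uses a simple greedy accept-if-better rule. This is a deliberately simplified stand-in, not the GEPA algorithm itself, and every name in it (`evolve_prompt`, `evaluate`, `reflect`, the toy functions) is hypothetical. In a real setup `evaluate` would run rollouts and return evaluator feedback, and `reflect` would be an LLM call that reads that feedback and proposes a revised prompt.

```python
from __future__ import annotations

from typing import Callable


def evolve_prompt(
    prompt: str,
    evaluate: Callable[[str], tuple[float, str]],
    reflect: Callable[[str, str], str],
    budget: int,
) -> tuple[str, float]:
    """Greedy reflective loop over one module's prompt.

    evaluate(prompt) returns (score, feedback_text) and costs rollouts.
    reflect(prompt, feedback_text) returns a candidate prompt.
    """
    best_prompt = prompt
    best_score, feedback = evaluate(prompt)
    for _ in range(budget):
        candidate = reflect(best_prompt, feedback)
        score, candidate_feedback = evaluate(candidate)
        if score > best_score:  # keep only strict improvements
            best_prompt, best_score, feedback = candidate, score, candidate_feedback
    return best_prompt, best_score


# Toy stand-ins so the loop can be run without any model calls.
def toy_evaluate(prompt: str) -> tuple[float, str]:
    if "cite" in prompt:
        return 1.0, "ok"
    return 0.0, "answers lack citations"


def toy_reflect(prompt: str, feedback: str) -> str:
    if "citations" in feedback:
        return prompt + " Always cite sources."
    return prompt


best, score = evolve_prompt("Answer the question.", toy_evaluate, toy_reflect, budget=3)
```

The change stays local to one prompt and remains readable as plain text, which is the property the list above relies on.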

Where GRPO or other weight updates are still the better choice

- The base model genuinely lacks task capability.

- The task demands policy-level behavioural shifts that prompt updates cannot sustain.

- Your reward and evaluation pipeline is robust enough for RL-centric optimisation.

DeepSeekMath’s GRPO results are a strong reminder that RL-based methods remain highly effective for capability improvement, especially when objective rewards are available.

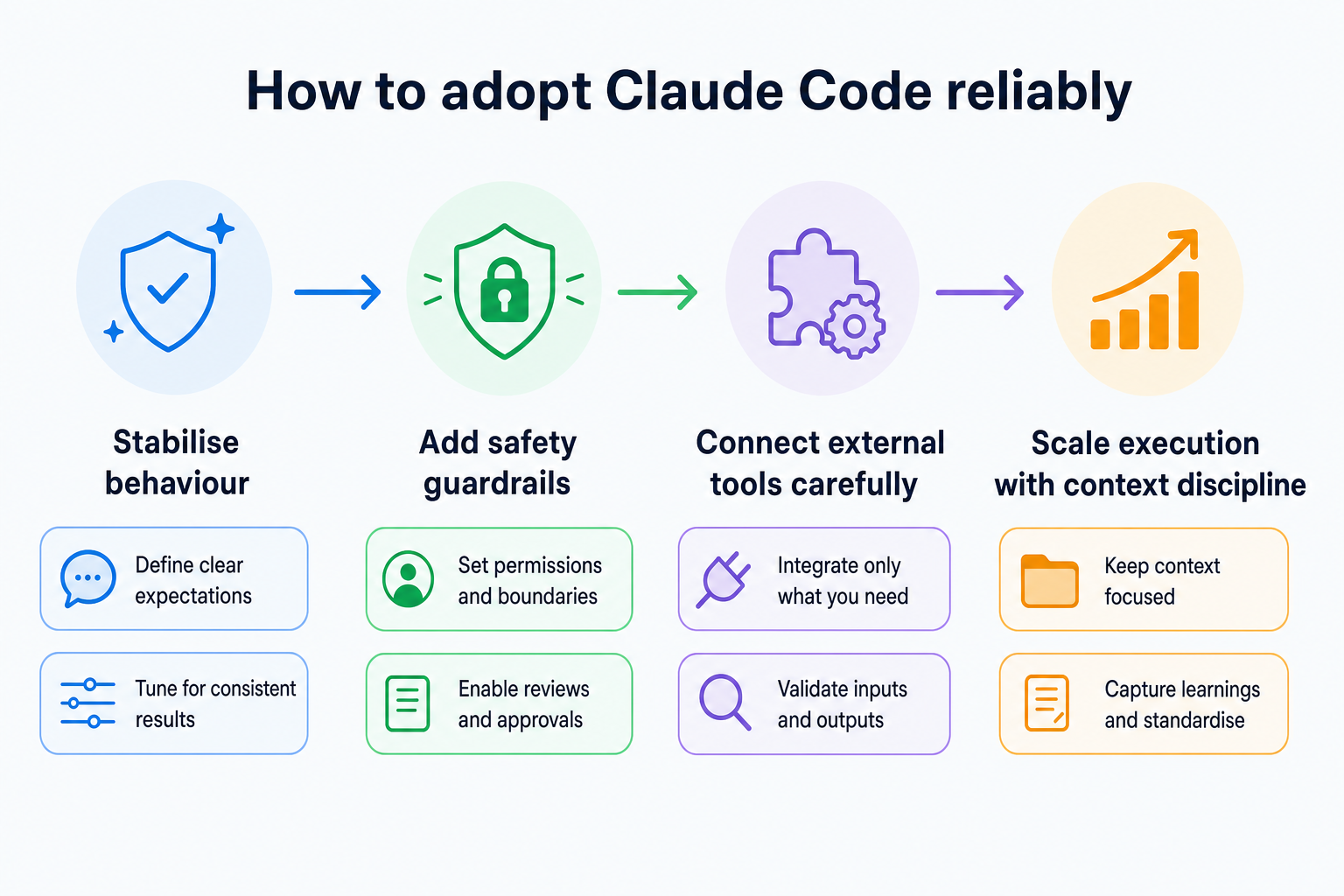

A practical adoption path for mixed-skill teams

- Start by instrumenting rollouts so failures are readable, not just scored.

- Run a prompt-evolution loop on one clearly scoped module and measure gains.

- Track rollout efficiency alongside quality metrics, not just final accuracy.

- Escalate to RL/fine-tuning only when evidence shows a real capability ceiling.
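The last two steps can be turned into simple bookkeeping. The sketch below is one possible way to do it, under assumptions: `gain_per_rollout` and `hit_ceiling` are hypothetical helpers, and the plateau rule (no meaningful improvement over the last few optimisation rounds) is just one heuristic for spotting a capability ceiling. The `window` and `min_gain` thresholds are placeholders to tune for your own evaluation noise.

```python
from __future__ import annotations


def gain_per_rollout(baseline: float, score: float, rollouts_used: int) -> float:
    """Quality improvement bought by each rollout spent on optimisation."""
    if rollouts_used <= 0:
        raise ValueError("rollouts_used must be positive")
    return (score - baseline) / rollouts_used


def hit_ceiling(scores: list[float], window: int = 3, min_gain: float = 0.01) -> bool:
    """True when the best score in the last `window` rounds beats the
    earlier best by less than `min_gain` (a plateau heuristic)."""
    if len(scores) <= window:
        return False  # not enough history to judge
    recent_best = max(scores[-window:])
    earlier_best = max(scores[:-window])
    return recent_best - earlier_best < min_gain
```

Comparing `gain_per_rollout` across prompt evolution and a weight-update pilot gives a like-for-like efficiency number, while `hit_ceiling` provides a recorded trigger for escalation rather than a judgment made by feel.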

This staged approach keeps optimisation grounded in evidence instead of ideology.